Your Innovation Lab Is Theater: How to Build Real Ventures

Most corporate innovation labs produce tours, demos, and annual reports — not ventures. Here's how to tell if yours is theater, why it keeps happening, and what real venture building actually looks like.

I've been invited to tour a lot of innovation labs. They usually have exposed brick walls, a 3D printer nobody uses, and a wall of sticky notes from a workshop that happened eight months ago. There's often a screen showing a dashboard of "innovation metrics" — ideas submitted, hackathon participants, NPS scores from events. What's never on the dashboard: ventures launched, revenue generated, assumptions validated.

These labs aren't building ventures. They're performing innovation for an internal audience.

And the people running them usually know it. They're smart, motivated people trapped in a structure that rewards activity over outcomes. They run workshops because workshops are easy to schedule and easy to report on. They manage idea funnels because idea funnels look impressive in quarterly reviews. They organize hackathons because hackathons generate excitement and photos for the company newsletter.

None of this is venture building. It's innovation theater, and it can cost companies millions of euros per year in direct costs, opportunity costs, and the slow erosion of organizational credibility around the word "innovation."

Signs Your Innovation Lab Is Theater

If you're running a corporate innovation program and you're not sure whether it's real or performative, here are the diagnostic questions. Be honest with yourself.

1. You Measure Inputs, Not Outcomes

Your annual report highlights: number of ideas submitted, number of hackathons run, number of employees engaged, NPS score from your last event, number of startups screened.

What it doesn't highlight: number of concepts validated with real customers, revenue from new ventures, ventures that reached product-market fit, ventures that were killed based on evidence (killing ventures based on evidence is a sign of health, not failure).

If your metrics could all go up while the business impact stays at zero, you're measuring theater, not innovation.

2. Nothing Has Been Killed

This is the most reliable signal. If every idea that enters your innovation funnel either "progresses" or quietly fades away, you don't have a venture-building process. You have an idea cemetery with good lighting.

Real venture building kills things. Frequently. Based on evidence. A healthy innovation program has a clear record of concepts that were tested, found lacking, and deliberately stopped. If you can't point to specific ventures you killed and explain why — with data — you're not doing the hard work.

3. The Team Has Never Talked to a Customer

I wish this were an exaggeration. I've walked into innovation labs where the team has been operating for over a year without a single direct conversation with an end customer. They have personas. They have market research reports. They have journey maps based on assumptions. They do not have a single quote from a real human being who would actually use or pay for what they're building.

If your innovation team's primary interaction with the outside world is through industry reports and startup scouting databases, they're doing desk research, not innovation.

4. Prototypes Are Demos, Not Tests

There's a crucial difference between a demo and a test. A demo shows stakeholders what something could look like. A test puts something in front of real users to learn whether it works.

Innovation theater produces beautiful demos. Polished click-through mockups. Impressive proof-of-concept videos. These are designed to generate internal excitement and budget approval. They're not designed to generate learning.

A real prototype is uglier. It's a functional-enough version of the product that a real user can try to accomplish a real task. It's designed to break in informative ways. The goal isn't to impress — it's to learn.

5. Success Is Surviving Budget Season

The ultimate tell: the innovation lab's primary strategic objective is its own continued existence. Conversations about "demonstrating value to leadership" and "securing next year's budget" dominate the team's attention. The lab produces case studies and impact reports designed to justify its existence rather than to learn from its work.

When self-preservation becomes the program's main output, innovation has been fully replaced by bureaucratic survival.

Why This Keeps Happening

Innovation theater isn't caused by bad people. It's caused by structural incentives that make theater the rational choice for everyone involved.

The Incentive Problem

The executive who sponsors the innovation lab gets credit for "investing in innovation" regardless of outcomes. The visible existence of a lab, a team, a program — that's the deliverable. If it also produces real ventures, great. But the executive's performance review doesn't depend on it.

The team running the lab quickly learns what gets rewarded: activities that are visible, safe, and easy to report. Running a hackathon is visible. Customer discovery interviews are not. Producing a strategy deck is safe. Recommending that a pet project be killed is not. Reporting "47 ideas in the pipeline" is easy. Reporting "we tested 12 ideas and 11 of them don't work" is hard — even though the second report represents far more value.

The Expertise Gap

Most corporate innovation teams are staffed with smart generalists — strategy consultants, project managers, business developers. These are capable people, but they typically lack specific experience in venture building: customer discovery, rapid prototyping, assumption testing, build-measure-learn cycles.

The result: teams default to what they know. Strategy frameworks. Market analysis. Stakeholder management. These are valuable skills, but they're not the skills that build ventures. It's like staffing a kitchen with excellent accountants — they'll keep the books perfectly, but dinner isn't getting made.

The Risk Problem

Corporate innovation labs operate within a corporate risk framework designed for the core business. This framework makes sense for the core business — a failed product launch from the main business line has real consequences. But applying the same risk framework to a venture exploration that's testing a hypothesis with a two-week prototype is absurd.

The result: innovation teams spend more time getting approval to run a test than they spend running the test. The organizational immune system treats every experiment as a potential threat to the brand, when in reality a failed prototype seen by twenty test users has essentially no impact on the company's reputation.

The Timeline Mismatch

Corporate planning cycles are annual. Venture building doesn't work on annual cycles. A venture might validate its core concept in six weeks, hit a wall at week ten, pivot, and find traction at week sixteen. Or it might be clearly dead by week four.

Annual budgets, annual reviews, and annual strategy cycles create pressure to show progress on a schedule that has nothing to do with the venture's actual trajectory. Teams stretch out work to fill the time allocated, or rush to show results before a review that's been scheduled regardless of the venture's readiness.

What Real Venture Building Looks Like

Let me describe what I've seen work — not in theory, but in practice, across multiple corporate environments.

Customer Evidence From Week One

Real venture building starts with customers, not with strategy. In the first week — not the first quarter, the first week — the team is having conversations with potential customers. Not surveys. Not focus groups. One-on-one conversations designed to understand the problem they're trying to solve.

These conversations are messy. They don't produce clean data. The first five interviews are usually wrong — wrong customer segment, wrong questions, wrong assumptions about what matters. That's fine. That's learning. By interview fifteen, the team has a fundamentally different understanding of the opportunity than they had on day one.

Assumptions, Not Ideas

Real venture building doesn't manage "ideas." It manages assumptions. Every venture is a collection of beliefs about customers, pricing, channels, feasibility, and timing. The job is to identify which of these beliefs are most critical and most uncertain, and then test them — in order of risk.

This is a fundamentally different mental model from the typical innovation funnel, where ideas are "developed" through stages of increasing fidelity. In an assumption-driven model, you might kill a beautiful idea in week two because you discover the core customer assumption doesn't hold. That's not failure. That's the system working.
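To make "in order of risk" concrete, here is a minimal sketch of an assumption backlog in Python. The 1-to-5 scales, the multiply-the-two-scores rule, and the example statements are my own illustrative assumptions, not a standard method; use whatever scoring your team can agree on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assumption:
    statement: str
    criticality: int  # hypothetical scale: 1 (nice to have) .. 5 (venture dies if false)
    uncertainty: int  # hypothetical scale: 1 (strong evidence) .. 5 (pure guess)

    @property
    def risk(self) -> int:
        # Illustrative rule: beliefs that are both critical and uncertain score highest.
        return self.criticality * self.uncertainty


def rank_by_risk(assumptions: list[Assumption]) -> list[Assumption]:
    """Return the assumptions sorted so the riskiest one is tested first."""
    return sorted(assumptions, key=lambda a: a.risk, reverse=True)


# Hypothetical backlog for a single venture.
backlog = [
    Assumption("Target customers actually have this problem", 5, 4),
    Assumption("We can build it with the current team", 4, 2),
    Assumption("Customers will pay the planned price", 5, 5),
    Assumption("Channel partners will resell it", 2, 4),
]

for a in rank_by_risk(backlog):
    print(a.risk, a.statement)
```

The point of the sort is that the team works from the top of the list, not from whichever assumption is most comfortable to test.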

Time-Boxed Experiments, Not Open-Ended Projects

Every test has a deadline, a hypothesis, and a decision framework. "We believe that mid-market CFOs will pay EUR 200/month for automated cash flow forecasting. We'll test this by showing a landing page with pricing to 50 targeted CFOs over two weeks. If more than 5 request a demo, we continue. If fewer than 3, we revisit the segment or the pricing."

That's a testable experiment. Compare it to the typical innovation lab approach: "We'll explore the FinTech space and identify opportunities for the next quarter." One produces evidence. The other produces slides.
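The CFO experiment above can be written down as data plus a decision rule, which is a useful check that the test really is decidable before it starts. This is a rough Python sketch; the class and field names are hypothetical. Note that the quoted thresholds say nothing about exactly 3, 4, or 5 demo requests, so the "inconclusive" outcome here is my assumption, not part of the original experiment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Experiment:
    hypothesis: str
    sample_size: int
    duration_days: int
    continue_above: int  # continue if demo requests exceed this number
    revisit_below: int   # revisit segment or pricing if requests fall below this number


def decide(exp: Experiment, demo_requests: int) -> str:
    """Map an observed result to the decision agreed before the test started."""
    if demo_requests > exp.continue_above:
        return "continue"
    if demo_requests < exp.revisit_below:
        return "revisit segment or pricing"
    # Assumption: results between the two thresholds are treated as inconclusive.
    return "inconclusive"


cfo_test = Experiment(
    hypothesis="Mid-market CFOs will pay EUR 200/month for automated cash flow forecasting",
    sample_size=50,
    duration_days=14,
    continue_above=5,
    revisit_below=3,
)

print(decide(cfo_test, demo_requests=7))
```

If you can't fill in every field of that record before the test begins, you don't have an experiment yet.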

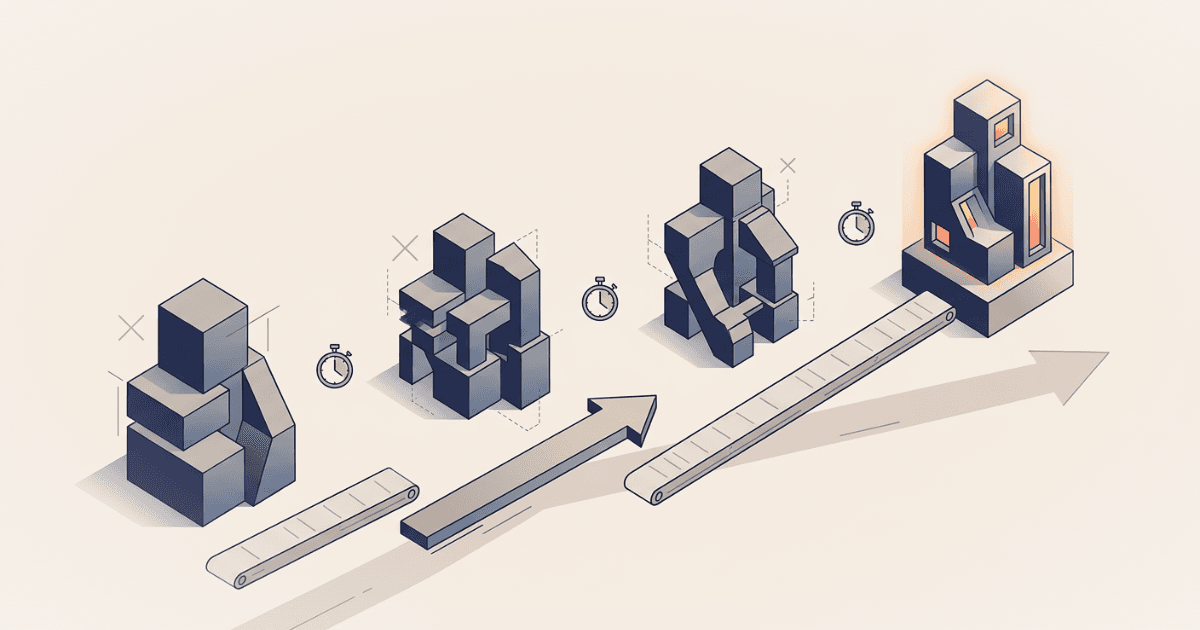

Build-Measure-Learn in Weeks, Not Quarters

A functional prototype should exist within the first three weeks of a venture exploration. Not a polished product. A working thing that lets a real user attempt a real task.

This prototype will be embarrassing. It should be. If it's not embarrassing, you spent too long building it. The point isn't to impress users — it's to learn from their behavior. Do they understand the value proposition? Can they complete the core task? Where do they get confused? What do they ask for that you didn't anticipate?

Decisions, Not Deferrals

Every two weeks, the team makes a decision. Continue with this direction. Pivot to an alternative hypothesis. Or stop, because this isn't going to work and continuing would be a waste.

These decisions are based on evidence, not opinion. The team presents what they tested, what they learned, and what they recommend. Leadership's role is to ask hard questions and make the call — not to request more analysis as a way of avoiding a decision.

The Minimum Viable Innovation Program

If you're starting from scratch — or restarting after a failed innovation lab — here's the minimum structure that actually works.

1. A Clear Strategic Constraint

Don't tell the team to "find new opportunities." Tell them where to look. "We believe there's an opportunity to serve our existing customers with digital services in the maintenance space." That's a constraint that focuses effort without prescribing solutions.

The constraint should be broad enough to allow unexpected discoveries but narrow enough to prevent the team from spending three months deciding what market to explore.

2. A Dedicated Team With Autonomy

Minimum: two to three people, at least 80% dedicated. Not "innovation is part of their job alongside their regular responsibilities." Dedicated. With explicit authority to make decisions about experiments, customer conversations, and prototype development without committee approval.

This is where most corporate programs fail. They allocate 20% of five people's time and expect venture-building outcomes. That's not a venture team — that's a hobby.

3. A Decision Cadence

Every two weeks: a 30-minute review where the team presents evidence and recommendations. The audience is small — ideally two to three senior leaders who have the authority to say yes, no, or pivot. Not a steering committee of twelve. Not a monthly town hall. A focused decision meeting with people who can act.

4. A Budget That Matches the Stage

Early-stage venture exploration doesn't need a lot of money. It needs some money — for prototyping tools, customer incentives, potentially a fractional expert. But we're talking tens of thousands, not hundreds of thousands.

The budget conversation is often where innovation theater starts. A large budget creates pressure to spend it — which leads to hiring agencies, commissioning research, building elaborate prototypes. All of which slow down learning. Constraint breeds creativity. A small budget forces the team to be scrappy, which is exactly the behavior you want at this stage.

5. Explicit Kill Criteria

Before starting any venture exploration, define what failure looks like. "If we can't find five paying customers in the first six weeks, we stop." "If customer interviews reveal that the problem isn't in the top three priorities for our target segment, we pivot or stop."

Kill criteria do two things: they make the "stop" decision less political (the criteria were agreed upon in advance) and they prevent zombie ventures — initiatives that are clearly failing but persist because no one wants to be the person who pulls the plug.
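One way to keep kill criteria from being renegotiated later is to record them as explicit checks before the work starts. The sketch below encodes the two example criteria from this section in Python; the evidence keys (`week`, `paying_customers`, `problem_priority_rank`) are hypothetical names I've chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Mapping

Evidence = Mapping[str, float]


@dataclass(frozen=True)
class KillCriterion:
    description: str
    triggered: Callable[[Evidence], bool]


# Agreed in advance, before any experiment runs.
CRITERIA = (
    KillCriterion(
        "Fewer than five paying customers after the first six weeks",
        lambda e: e["week"] >= 6 and e["paying_customers"] < 5,
    ),
    KillCriterion(
        "Problem is not in the top three priorities for the target segment",
        lambda e: e["problem_priority_rank"] > 3,
    ),
)


def triggered_criteria(evidence: Evidence, criteria=CRITERIA) -> list[str]:
    """Return the descriptions of every pre-agreed criterion the evidence has triggered."""
    return [c.description for c in criteria if c.triggered(evidence)]


# Hypothetical review at week six.
review = {"week": 6, "paying_customers": 2, "problem_priority_rank": 2}
print(triggered_criteria(review))
```

An empty list means the venture continues. A non-empty list puts the stop-or-pivot conversation on the agenda, and nobody has to volunteer to be the one who raises it.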

Making the Switch

If you're currently running an innovation lab that looks more like theater than venture building, here's how to transition — without blowing everything up.

Step 1: Audit Honestly

Take your current portfolio of innovation activities and sort them into two buckets: "generating validated learning" and "generating internal visibility." Be ruthless. A hackathon that produced photos and enthusiasm but no follow-up action is visibility. A customer discovery sprint that produced ten interviews and a clear insight about customer willingness to pay is learning.

You'll probably find that 80% of your activities are in the visibility bucket. That's not a condemnation of your team — it's a reflection of the incentives they're operating under.

Step 2: Pick One Real Venture

Don't try to transform the whole program at once. Pick one venture concept — ideally one that has some early customer signal — and run it using the approach described above. Dedicated team, customer contact from week one, two-week decision cadence, time-boxed experiments.

This becomes your proof case. When it produces real learning (whether the venture succeeds or is killed with evidence), you have a concrete example to point to: "This is what real venture building looks like. This is what it costs. This is what it produces."

Step 3: Change the Metrics

Stop reporting inputs. Start reporting outcomes. The metrics that matter:

- Number of customer conversations conducted (leading indicator)
- Number of assumptions tested (leading indicator)
- Number of ventures validated (lagging indicator)
- Number of ventures killed with evidence (health indicator)
- Time from concept to first customer contact (speed indicator)
- Time from concept to go/no-go decision (efficiency indicator)

Present these alongside your old metrics for a quarter. The contrast will make the point more effectively than any argument.
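Several of these metrics fall out of a simple log with one record per venture. Here is a minimal Python sketch, assuming a hypothetical record format with a concept date, a first customer contact date, and a decision. Using the median for the two time metrics is my choice, not a requirement, and the sample ventures are invented.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass(frozen=True)
class Venture:
    name: str
    concept_date: date
    first_customer_contact: Optional[date] = None
    decision_date: Optional[date] = None
    decision: Optional[str] = None  # "validated", "killed", or None if still open


def outcome_report(ventures: list[Venture]) -> dict:
    """Summarize outcome metrics; time metrics are medians in days, or None if no data."""
    contact_days = [
        (v.first_customer_contact - v.concept_date).days
        for v in ventures
        if v.first_customer_contact is not None
    ]
    decision_days = [
        (v.decision_date - v.concept_date).days
        for v in ventures
        if v.decision_date is not None
    ]
    return {
        "ventures_validated": sum(v.decision == "validated" for v in ventures),
        "ventures_killed_with_evidence": sum(v.decision == "killed" for v in ventures),
        "median_days_to_first_customer_contact": median(contact_days) if contact_days else None,
        "median_days_to_decision": median(decision_days) if decision_days else None,
    }


# Invented sample portfolio.
portfolio = [
    Venture("A", date(2024, 1, 8), date(2024, 1, 11), date(2024, 2, 19), "validated"),
    Venture("B", date(2024, 1, 15), date(2024, 1, 22), date(2024, 2, 12), "killed"),
    Venture("C", date(2024, 2, 1)),  # no customer contact yet
]

print(outcome_report(portfolio))
```

A venture like "C" that has a concept date and nothing else is exactly what the old input metrics would have counted as pipeline.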

Step 4: Change the Incentives

This is the hard part, and it requires executive sponsorship. The team needs to be rewarded for learning, not for activity. That means celebrating a well-evidenced kill decision as much as a successful launch. That means valuing a team that validated one concept in six weeks over a team that has twenty concepts "in the pipeline" with no evidence behind any of them.

Step 5: Be Patient, Then Be Impatient

Changing organizational behavior takes time. The first venture you run this way will feel uncomfortable. The team will want to do more research before talking to customers. Leadership will want more polish before showing a prototype. The two-week decision cadence will feel too fast.

Be patient with the discomfort. Be impatient with the results. If the new approach isn't producing faster, clearer learning within the first two months, something is wrong — and it's usually that the old incentives haven't actually changed.

The Bottom Line

Innovation theater persists because it's safe. It produces visible activity without requiring anyone to confront uncomfortable truths about what customers actually want, what the organization can actually execute, or which ideas should actually be killed.

Real venture building is uncomfortable. It produces evidence that challenges assumptions. It kills ideas that people are emotionally attached to. It moves faster than corporate planning cycles allow. It requires a kind of organizational honesty that most companies find deeply unnatural.

But it also produces real ventures. Not slide decks about ventures. Not innovation metrics that impress at town halls. Actual products and services that serve real customers and generate real revenue.

The choice isn't between innovation and no innovation. It's between innovation theater — which feels good and produces nothing — and real venture building, which feels uncomfortable and produces results.

Your innovation lab has nice furniture. The question is whether it's also building anything.
